To Sequence or Not To Sequence

by John Turner

Posted on February 14, 2013

In software engineering, queuing is an extremely important concept because it has a significant impact on the efficiency of a system. There is a large body of research on queuing within various systems, and on approaches to reducing either queuing itself or its perceived impact.

I was recently involved in a discussion about queuing in messaging systems, and it led me to consider some of the typical approaches to reducing or eliminating queuing. One such approach is to use a message sequence and a resequencer. This approach applies where the order of queued messages matters, i.e. the order in which messages enter the queue must be the same as the order in which they leave it.

I’ve found queuing concepts are best explained using an analogy, and in this instance a useful one is taking a flight (I’m not an expert in airline procedure, so forgive any deviations from reality). Let’s assume that the procedure is as follows:

Booking Your Ticket

To book a ticket you must log into the website, select the flight, pay the fee and print out the ticket. For simplicity, the airline will allocate tickets using sequential seat numbers that increase from the back of the plane to the front.

Check In

Let’s assume that everyone must check in, and that check-in involves presenting your passport and ticket. The check-in agent will then take your bags, tag them and wish you a pleasant flight.

Pass Security

Having checked in, you proceed to security, where you place your belt, shoes, carry-on luggage and dignity on the conveyor belt for scanning. You pass through security, get dressed and hurry away, embarrassed that the security personnel now know you travel with your childhood teddy bear.

Eat Meal

Feeling a bit peckish, you head straight for the food court, where you devour breakfast before heading to the gate.

Board Airplane

When boarding the plane, you are asked to board in the order in which the tickets were issued. Because tickets carry sequential seat numbers that increase from the back of the plane to the front, boarding in this order means nobody blocks the aisle for the people behind them.

In this sequence, order is only important at the first and last steps.

Maintain Strict Order

One way to make sure order is maintained is to use a first-in, first-out (FIFO) queue. This guarantees that the first person (P1) to book a ticket is the first to check in, the first to pass security…and the first to board the plane. This would work, but it results in queuing at every single step in the sequence.

For example, if P2 (the second person to book a ticket) arrives at check-in first, they have to wait for P1 before they can check in. If they arrive at security before P1, they have to wait again. This sounds ridiculous, and indeed it is. However, this is often how order is maintained within a messaging system (though sometimes with good reason).

The other way to maintain strict order is to let only a single person into the process at a time. P1 would book a ticket, check in, pass security, eat a meal and board the airplane before P2 is allowed to book a ticket. In the airport example this is crazy, but in some messaging systems it can work. It all depends on the activity within the chain.

Sequencing and Resequencing

An alternative would be to allow people to check in in the order in which they arrive at the check-in desk. They can likewise pass security and eat their meal in the order they arrive, so nobody waits for a passenger who booked earlier. Removing this queuing makes for a more efficient flow of people through the airport.

The price to pay for this is that order must be re-established before people board the airplane.

This theory can be applied directly to messaging systems. The Enterprise Integration Patterns catalogue refers to these as the Message Sequence (booking your ticket) and Resequencer (boarding the plane) patterns.
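In messaging terms, the boarding gate is a resequencer. Below is a minimal Python sketch (not a reference implementation of the pattern), assuming each message carries a unique integer sequence number assigned when it enters the flow (the ticket number) and that there are no gaps; the function name `resequence` is hypothetical. Messages that arrive early are parked in a min-heap until every earlier number has been released.

```python
import heapq
from typing import Iterable, Iterator, List, Tuple

Message = Tuple[int, str]  # (sequence number, payload)


def resequence(messages: Iterable[Message], first_seq: int = 1) -> Iterator[Message]:
    """Yield messages in sequence order, buffering any that arrive early.

    Assumes sequence numbers are unique and contiguous, starting at first_seq.
    """
    buffer: List[Message] = []  # min-heap ordered by sequence number
    next_seq = first_seq
    for msg in messages:
        heapq.heappush(buffer, msg)
        # Release every buffered message that continues the unbroken run.
        while buffer and buffer[0][0] == next_seq:
            yield heapq.heappop(buffer)
            next_seq += 1


if __name__ == "__main__":
    # Passengers reach the gate out of order; boarding restores ticket order.
    arrivals = [(2, "P2"), (3, "P3"), (1, "P1"), (5, "P5"), (4, "P4")]
    print([name for _, name in resequence(arrivals)])
    # prints ['P1', 'P2', 'P3', 'P4', 'P5']
```

Every step before the gate can run in arrival order; only this final stage buffers. A production resequencer would also need a policy for gaps (otherwise one lost message holds up everything behind it), typically a timeout or a bounded buffer, which this sketch omits.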

Classifying Cloud and Cloud Providers

by John Turner

Posted on February 13, 2013

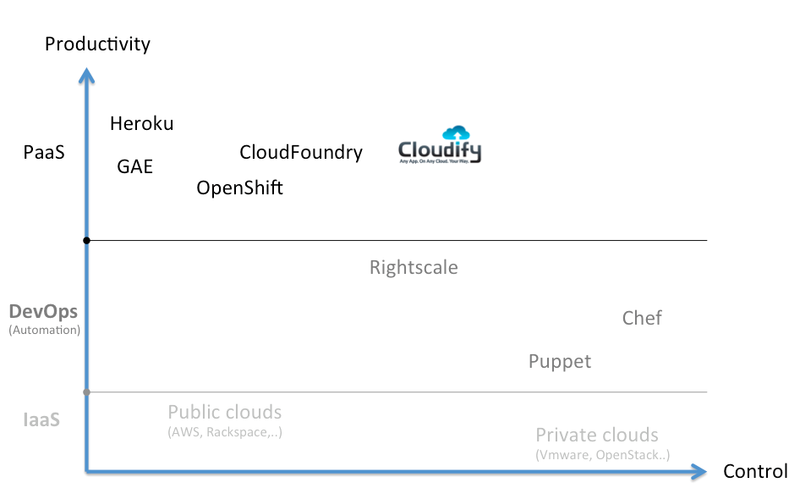

I was reading a post on Mapping the Cloud/PaaS Stack by Nati Shalom (CTO and founder of GigaSpaces) and tend to agree with his assertion that a reasonable way to classify the cloud and cloud providers is to map them against productivity and control.

In this context, productivity is a measure of how efficiently value can be delivered from inception through to realisation (you might think of this as from requirement to production). Control is the ability to specify and manage the details of the compute, network and storage infrastructure, deployment topology, operational management etc.

If we step back for a moment and consider cloud adoption in a typical corporate environment, it becomes easier to see why this classification is useful.

In a typical corporate environment there is a substantial legacy burden (I call it a burden because, more often than not, that is what it is). It would be impossible for an organisation to migrate this legacy onto a high-productivity, low-control cloud without re-engineering those applications. I’ve seen this referred to as the requirement for an application to be cloud native (i.e. aware that it is executing in a cloud environment).

A second side effect of running in a low-control cloud environment is that applications become intrinsically linked to the execution environment, so you often sacrifice any opportunity to maintain cloud portability (i.e. you’re locked in)!

The opposite is to provide a high degree of control. This affords the flexibility to choose the language runtime, application container, messaging provider etc., and so is better aligned to a legacy environment in which the technology stack has evolved (and diverged). The cost of a high degree of control is typically increased complexity and reduced productivity.

So the nirvana is a platform with a high degree of both productivity and control. Of course, given a liberal sprinkling of governance, this is exactly what a DevOps environment would provide using tools such as Puppet or Chef. What is interesting is that GigaSpaces’ Cloudify is attempting to build this governance into a platform that ensures some degree of conformity (and thus productivity) while facilitating control within the platform itself (i.e. the platform is open to extension). What I also found compelling about this approach is that the platform itself uses the tools typically leveraged by DevOps and so facilitates a lower cost of divorce.

With all this said, a useful addition to the classification is maturity. What I find challenging about all things cloud is the fragmentation and the lack of maturity. Fragmentation is increasing as providers sell their own vision of cloud, and so cloud as a whole lacks maturity. As a consumer of cloud services (private, public or public/private), it is therefore necessary to employ appropriate abstraction to facilitate divorce from your chosen provider should the industry make an abrupt change of direction.

Using Graph Theory & Graph Databases to Understand User Intent

by John Turner

Posted on February 12, 2013

I was in London recently for Cloud Expo Europe and had the opportunity to attend the Neo4j User Group meetup hosted at Skills Matter. Michael Cutler of Tumra described how they used graph-based natural language processing algorithms to understand user intent.

Michael’s aim was to enable enhanced social TV experiences and direct users to the content that interests them, by applying natural language processing, graph theory and machine learning. The presentation walked through the thought process and development work that delivered a prototype in a few weeks.

The first attempt was to implement a named entity recognition algorithm. They downloaded the Wikipedia data in RDF format from DBpedia and stored it in the Hadoop Distributed File System (HDFS). They used an N-gram model supplemented with Apache Lucene for entity matching. The result was a matching algorithm that was unable to distinguish between different entities with the same name.
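The weakness of this first attempt is easy to reproduce. The following Python sketch generates word n-grams from a sentence and looks each one up in an index of entity names. It swaps a plain dictionary in for Apache Lucene, and the index contents and entity IDs are hypothetical, not DBpedia data. Because one surface name can map to several entities, matching alone returns every candidate and cannot choose between them.

```python
from typing import Dict, List, Tuple


def word_ngrams(text: str, max_n: int = 3) -> List[Tuple[str, ...]]:
    """Return every contiguous word n-gram of length 1..max_n."""
    words = text.split()
    return [tuple(words[i:i + n])
            for n in range(1, max_n + 1)
            for i in range(len(words) - n + 1)]


def match_entities(text: str, index: Dict[str, List[str]], max_n: int = 3) -> Dict[str, List[str]]:
    """Map each n-gram that names a known entity to all of its candidate entity IDs."""
    matches: Dict[str, List[str]] = {}
    for gram in word_ngrams(text, max_n):
        name = " ".join(gram)
        if name in index:
            matches[name] = index[name]
    return matches


# Hypothetical index: one surface name can refer to several distinct entities.
ENTITY_INDEX: Dict[str, List[str]] = {
    "David Cameron": ["David_Cameron_(politician)", "David_Cameron_(other)"],
    "Euro": ["Euro_(currency)"],
}

if __name__ == "__main__":
    # "David Cameron" yields two candidates: the n-gram match cannot tell them apart.
    print(match_entities("David Cameron discusses the Euro", ENTITY_INDEX))
```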

The second attempt added disambiguation. MapReduce was used to extract entities from HDFS and insert them into Neo4j (a graph database), which stored entities as nodes and relationships as edges. Graph algorithms were then used to interrogate the connections. Additional context was supplied when querying the data (for example, searching for David Cameron in the context of the Euro would find the David Cameron most closely associated with the Euro). This returned more accurate results, but searching 250 million nodes and 4 billion edges was horrendously inefficient.

In search of more efficient queries, the third attempt removed entities that were not people, places or concepts. But there were still tens of millions of entities and billions of connections. So, rather than retaining individual connections, the connections between each pair of entities were aggregated into a single connection, weighted by the number of original connections. Taking the reciprocal of the weight (1/weight) gave a cost, with a low cost indicating a strong association between entities. Queries were now taking seconds.
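The aggregation and cost derivation can be sketched in Python, along with a context-based disambiguation query in the spirit of the second attempt. The talk as summarised here does not say which graph algorithm was used to query the costs; Dijkstra's shortest path is an assumption, and the entity names and connection data are hypothetical.

```python
import heapq
from collections import Counter, defaultdict
from typing import Dict, Iterable, List, Tuple

Graph = Dict[str, Dict[str, float]]


def build_cost_graph(raw_edges: Iterable[Tuple[str, str]]) -> Graph:
    """Collapse repeated connections into one undirected edge with cost = 1 / weight."""
    # weight = number of original connections between the same pair of entities
    weights = Counter(tuple(sorted(edge)) for edge in raw_edges)
    graph: Graph = defaultdict(dict)
    for (a, b), weight in weights.items():
        graph[a][b] = graph[b][a] = 1.0 / weight  # strong association -> low cost
    return graph


def path_cost(graph: Graph, source: str, target: str) -> float:
    """Dijkstra's algorithm: cheapest total cost from source to target (inf if unreachable)."""
    best = {source: 0.0}
    heap = [(0.0, source)]
    while heap:
        cost, node = heapq.heappop(heap)
        if node == target:
            return cost
        if cost > best.get(node, float("inf")):
            continue  # stale heap entry
        for neighbour, edge_cost in graph.get(node, {}).items():
            new_cost = cost + edge_cost
            if new_cost < best.get(neighbour, float("inf")):
                best[neighbour] = new_cost
                heapq.heappush(heap, (new_cost, neighbour))
    return float("inf")


def disambiguate(graph: Graph, candidates: List[str], context: str) -> str:
    """Choose the candidate entity most cheaply (i.e. most strongly) linked to the context."""
    return min(candidates, key=lambda c: path_cost(graph, c, context))


# Hypothetical raw connections, e.g. links extracted from articles.
RAW_EDGES = [
    ("Cameron_politician", "Euro"),
    ("Cameron_politician", "Euro"),
    ("Cameron_politician", "Euro"),
    ("Cameron_other", "Film"),
    ("Film", "Euro"),
]

if __name__ == "__main__":
    graph = build_cost_graph(RAW_EDGES)
    print(disambiguate(graph, ["Cameron_politician", "Cameron_other"], "Euro"))
```

Three parallel connections collapse into one edge with cost 1/3, whereas the alternative candidate reaches the context only through two single connections (total cost 2), so the first candidate wins. Collapsing parallel edges is also what shrinks the graph enough for queries to become fast.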

The fourth attempt applied this solution to live news feeds. A caching solution was implemented, and simple predictors were used to estimate the likelihood of entities occurring. A ‘probabilistic context’ was maintained that retained recently returned entities, and these were returned from cache while the overall context of the news feed remained the same. Bayes’ Rule was used to derive the relative probability of the entity being the same. This resulted in an average query time of 35ms.
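The post does not detail how Bayes’ Rule was applied, so the following Python sketch is an assumption: the prior P(entity) stands in for the probabilistic context (entities recently returned for the feed) and the likelihood P(context | entity) stands in for a hypothetical predictor’s score. The entity names and numbers are invented for illustration. Because only a relative probability is needed, the evidence term P(context) cancels out of the ratio.

```python
from typing import Dict


def posterior(prior: Dict[str, float], likelihood: Dict[str, float]) -> Dict[str, float]:
    """Bayes' rule over a set of candidate entities.

    P(entity | context) = P(context | entity) * P(entity) / P(context),
    where P(context) is the sum of the numerators over all candidates.
    """
    joint = {e: likelihood[e] * prior[e] for e in prior}
    evidence = sum(joint.values())
    return {e: p / evidence for e, p in joint.items()}


def relative_probability(prior: Dict[str, float], likelihood: Dict[str, float],
                         a: str, b: str) -> float:
    """How many times more probable candidate a is than b; P(context) cancels."""
    return (likelihood[a] * prior[a]) / (likelihood[b] * prior[b])


# Hypothetical numbers for illustration only.
prior = {"Cameron_politician": 0.7, "Cameron_other": 0.3}       # from recent context
likelihood = {"Cameron_politician": 0.2, "Cameron_other": 0.6}  # from a predictor

if __name__ == "__main__":
    print(posterior(prior, likelihood))
    print(relative_probability(prior, likelihood, "Cameron_politician", "Cameron_other"))
```

Here the prior favours the cached entity, but the new evidence shifts the balance towards the alternative; a ratio well above 1 would justify continuing to serve the cached entity.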

The fifth and final attempt added support for multiple languages by relating language to concepts and operating on the underlying concepts. The example used was the concept of the colour red.
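Operating on concepts rather than words can be sketched as a lexicon that maps each (language, word) pair to a language-neutral concept ID, so that everything downstream (graph lookups, caching) works on the ID alone. This is a minimal Python illustration; the lexicon, the `concept:red` ID and the function names are hypothetical.

```python
from typing import Dict, Optional, Tuple

# Hypothetical lexicon: words in several languages point to one language-neutral concept.
LEXICON: Dict[Tuple[str, str], str] = {
    ("en", "red"): "concept:red",
    ("fr", "rouge"): "concept:red",
    ("de", "rot"): "concept:red",
    ("es", "rojo"): "concept:red",
}


def to_concept(lang: str, word: str) -> Optional[str]:
    """Map a (language, word) pair to its concept ID, or None if unknown."""
    return LEXICON.get((lang, word.lower()))


def same_concept(a: Tuple[str, str], b: Tuple[str, str]) -> bool:
    """True when two words, possibly in different languages, denote the same known concept."""
    concept_a, concept_b = to_concept(*a), to_concept(*b)
    return concept_a is not None and concept_a == concept_b


if __name__ == "__main__":
    print(same_concept(("en", "Red"), ("de", "rot")))
```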

Thanks to Michael for a very interesting and informative talk. If you found this interesting, Skills Matter have posted a podcast of the talk, Using Graph Theory & Graph Databases to Understand User Intent.

As a side note, Skills Matter host a number of excellent user groups and evening events. Next time you are in the area, check out what’s on and drop by. They are a really friendly bunch who are involved in all manner of interesting projects.

Cloudera Essentials for Apache Hadoop

by John Turner

Posted on February 11, 2013

It has been interesting to see a number of companies (such as Cloudera and Hortonworks) take on the mantle of providing enterprise services for Hadoop and its ecosystem. These services tend to include a certified Hadoop bundle, consultancy, support and training.

We have also seen a number of hardware vendors and cloud providers release offerings, such as the reference architectures from Dell, HP, IBM and Amazon.

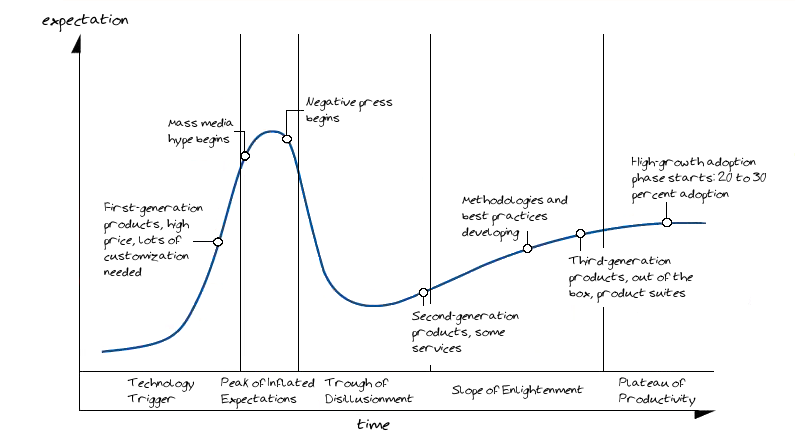

Importantly for Hadoop, this will help drive its maturity and adoption. In 2012, Gartner suggested that Hadoop was past the Peak of Inflated Expectations and sliding toward the ‘Trough of Disillusionment’. While there are areas where adoption is further along the curve, I would tend to agree with Gartner’s assertion.

The activities these companies engage in are increasing awareness of Hadoop and the types of problem it can address. To this end, Cloudera created an excellent set of webinars called ‘Cloudera Essentials for Hadoop’, a comprehensive six-part introduction to Hadoop.

Should we Implement UEFA Financial Fair Play in IT

by John Turner

Posted on February 10, 2013

It has been very interesting to watch the clubs of the Premier League (EPL) lobby for and against the proposed Financial Fair Play (FFP) rules (http://www.uefa.com/uefa/footballfirst/protectingthegame/financialfairplay/index.html). The FFP rules set out a budgetary framework intended to ensure that football clubs align their spending with their income.

Given a number of recent high-profile bankruptcies, this would appear to be a no-brainer for the EPL. A bankruptcy has a widespread impact on the club, its supporters, the local economy and the league as a whole. So the question is: why the need for lobbying in this particular debate?

Clubs that are run responsibly have little to fear from FFP, as they probably already adhere to the constraints on spending. In fact, FFP is a positive step for them, as they will no longer have to compete against clubs that spend recklessly or that have wealthy sponsors. On the flip side, clubs whose spending is subsidised by wealthy sponsors would no longer be able to spend beyond the income they generate. This is not very appealing to the oil and gas barons who like to tinker with football clubs in their downtime. Then there are newly promoted clubs that want to invest significantly to give themselves a chance of staying in the league.

But how is this relevant to IT? I would say that it is directly relevant. IT companies engage in the same competition for talent as EPL clubs, and they often compete by offering players better financial rewards.

So if your company does not have the finances (or is too responsible) to attract talent with ever-increasing salaries and bonuses, how can you compete?

Arsenal compete by offering a strong sense of community. They have an established culture that extends trust to younger and less established players. Young players get the opportunity to grow faster than they might at clubs that spend big on transfers. This culture permeates the club from the board through the manager and to the players themselves.

Liverpool offer players the opportunity to become involved in an interesting project that has a clear vision for transforming the club on and off the field. Players can be excited about the changes in transfer policies, playing style and restoring the club to the pinnacle of European football.

What these two examples teach us is that if you are not Google, Amazon or Apple you can still compete for talent by offering something that is more compelling and sustainable than money alone.

Translating this to the IT industry, companies could consider the following:

- Find ways to increase the talent pool by supporting initiatives such as CoderDojo.

- Invest in the grass roots (graduates) and focus on their growth. Support alternative routes into IT, and get involved with universities to ensure that they impart relevant skills and knowledge to their students.

- Facilitate progression where people demonstrate out-of-band skills and expertise. All too often companies attach too much significance to tenure or years of experience when developing the bench for senior positions.

- Be ambitious when considering what projects to undertake and trust your team to deliver. Value their feedback and allow them to take ownership of your projects.

- Allow your team to own their workspace. Want bean bags? Sure. Want to invite friends over to talk tech? No problem. Prefer Linux to Windows? We’ll find a way. Whatever it is, be as open as health and safety or confidentiality allows.

- Support the things your team are interested in, even if they are not directly relevant to your company (today). IT teams are really inventive and will often see applications within your company that you cannot. Hack days and 20% time were designed with just this in mind.

- Remove (or hide) the things that frustrate your team such as unnecessary bureaucracy.

I could go on, but I’ll stop now as I would never be able to come up with a definitive list. Below are some other resources that have already considered the same problem.

- Programmer Nesting Rituals

- Tapping Top Young Talent

- Top Ten Reasons Why Large Companies Fail To Keep Their Best Talent

What non-financial benefits do you find compelling?